PostgreSQL is a common fit for SaaS products because it supports several viable multi-tenant models without forcing an early infrastructure split. A small team can start with shared tables and tenant_id, then evolve toward schema-per-tenant or database-per-tenant for customers that need stronger isolation. The difficult part is not choosing the most isolated model on paper. It is choosing the model whose operational cost matches the product, the customer base, and the expected failure modes.

The central trade-off is simple: shared data models are easier to operate at first, while isolated models make customer-level recovery, access control, and noisy-neighbor management easier later. PostgreSQL gives you enough tools to build either, but it will not protect you from unclear tenancy boundaries, weak backup design, or queries that silently scan across tenants.

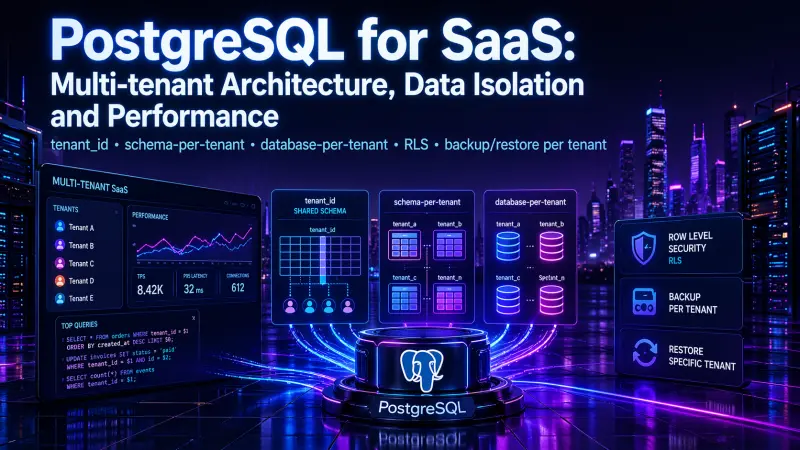

The Three Common SaaS Tenancy Models

Most PostgreSQL SaaS systems use one of three models:

Shared tables with a

tenant_idcolumnSeparate schema per tenant

Separate database per tenant

Each model can work in production. Each one changes what happens when you need to debug a slow tenant, restore one customer, enforce data isolation, or run migrations.

Model | Data isolation | Operational overhead | Query complexity | Customer-level restore | Migration complexity | Typical fit |

|---|---|---|---|---|---|---|

Shared tables with tenant_id | Low to Medium | Low | Medium | Hard | Low | High-volume SaaS with many small tenants |

Schema per tenant | Medium | Medium | Low to Medium | Moderate | Medium to High | B2B SaaS with moderate tenant count |

Database per tenant | High | High | Low | Easier | High | Enterprise SaaS, strict isolation, regulated customers |

Shared tables with RLS | Medium to High | Medium | Medium | Hard | Medium | Shared-table SaaS needing stronger access control |

The important detail is that these are not only schema choices. They are operating models.

Shared Tables with

tenant_id: Simple Until It Is Not

The shared-table model keeps all tenants in the same tables and adds tenant_id to every tenant-owned row. It is usually the lowest-friction starting point because migrations, connection pooling, monitoring, and application deployment stay simple.

A production-grade shared model must treat tenant_id as part of the data model, not as a filter added by habit.

CREATE TABLE tenants (

id uuid PRIMARY KEY,

name text NOT NULL,

created_at timestamptz NOT NULL DEFAULT now()

);

CREATE TABLE projects (

tenant_id uuid NOT NULL REFERENCES tenants(id),

id uuid NOT NULL,

name text NOT NULL,

created_at timestamptz NOT NULL DEFAULT now(),

PRIMARY KEY (tenant_id, id)

);

CREATE INDEX projects_tenant_created_at_idx

ON projects (tenant_id, created_at DESC);The composite primary key is not cosmetic. It makes tenant scope explicit and reduces the chance of accidental cross-tenant joins. In SaaS systems, globally unique IDs are useful, but tenant-scoped uniqueness is often more useful for constraints, joins, and index design.

A common mistake is creating indexes on business columns without tenant_id:

-- Weak pattern for many SaaS workloads

CREATE INDEX projects_created_at_idx ON projects (created_at DESC);That index may help global admin screens, but most tenant-facing queries filter by tenant first. For ordinary product traffic, indexes should usually start with tenant_id and then follow the query’s sort or lookup pattern.

-- Better for tenant-scoped product traffic

CREATE INDEX projects_tenant_status_created_at_idx

ON projects (tenant_id, status, created_at DESC);Shared tables are efficient when tenants are numerous and individually small. They become harder when tenants vary widely in size, when one customer needs point-in-time recovery, or when enterprise contracts require stronger isolation guarantees.

RLS: A Safety Boundary, Not a Replacement for Design

PostgreSQL Row-Level Security can enforce tenant isolation inside the database. With RLS, a query only sees rows allowed by policies, even if application code forgets a WHERE tenant_id = ... condition.

ALTER TABLE projects ENABLE ROW LEVEL SECURITY;

CREATE POLICY tenant_isolation_projects

ON projects

USING (

tenant_id = current_setting('app.current_tenant')::uuid

)

WITH CHECK (

tenant_id = current_setting('app.current_tenant')::uuid

);The application must set the tenant context for each transaction or request. SET LOCAL is safer than a long-lived session setting because it is scoped to the current transaction.

BEGIN;

SET LOCAL app.current_tenant = '7b7f3f29-84e5-4c95-9357-31a92b9c50b2';

SELECT id, name

FROM projects

ORDER BY created_at DESC

LIMIT 20;

COMMIT;RLS is most useful when it is treated as a second line of defense. Application queries should still be tenant-scoped, indexed, and tested as if RLS did not exist.

RLS can reduce the blast radius of application bugs, but it also adds debugging complexity. Developers need to understand why a query returns no rows under one role and many rows under another. Test suites should include tenant-isolation cases, especially for writes, background jobs, reporting queries, and administrative tools.

Schema per Tenant: Better Boundaries, Higher Migration Cost

With schema-per-tenant, every customer gets a dedicated PostgreSQL schema, such as tenant_123.projects, tenant_123.users, and tenant_123.invoices.

This model improves logical separation. It also makes tenant-specific dumps easier than in a fully shared model. However, migrations become more expensive because every schema must be updated consistently.

A typical request flow changes the search path for the tenant:

BEGIN;

SET LOCAL search_path = tenant_123, public;

SELECT id, name

FROM projects

ORDER BY created_at DESC

LIMIT 20;

COMMIT;This can make application SQL cleaner because table names do not need tenant prefixes. The risk is hidden state. If the wrong search_path is set, the query may hit the wrong tenant’s schema. Connection pooling must be configured carefully so tenant context does not leak between requests.

Schema-per-tenant is often a good middle ground when:

tenants are not extremely numerous

customer-level restore matters

tenant data volume differs significantly

enterprise customers need clearer boundaries

migrations can be automated and monitored per schema

The cost is operational discipline. You need migration tracking, failed-migration recovery, schema naming rules, and a way to run maintenance across many schemas without turning every deployment into a long-running database event.

Database per Tenant: Strong Isolation, Stronger Operations

Database-per-tenant gives each customer its own database. This provides the clearest isolation boundary among the common PostgreSQL SaaS models. It simplifies some customer-level operations, such as backup, restore, data export, and dedicated resource allocation.

It also increases infrastructure work. You need provisioning logic, connection management, monitoring per database, migration orchestration, and a strategy for shared metadata. If the product has thousands of small tenants, this model can become heavy unless automation is mature.

Database-per-tenant is usually justified when tenant isolation is a product requirement, not merely an engineering preference. Typical drivers include enterprise contracts, regulated workloads, customer-specific maintenance windows, dedicated infrastructure tiers, or strict data residency boundaries.

Backup and Restore: The Decision Most Teams Make Too Late

Tenant restore is where multi-tenant architecture becomes operationally real.

In a shared-table model, restoring one customer is hard because the tenant’s rows are mixed with everyone else’s rows. You can export tenant-specific rows, but restoring them safely requires dependency ordering, conflict handling, audit history decisions, and care around deleted or changed records.

For example, a tenant export may look simple:

pg_dump \

--data-only \

--table=projects \

--where="tenant_id = '7b7f3f29-84e5-4c95-9357-31a92b9c50b2'" \

--file=tenant_projects.sql \

app_dbThe problem is that real tenants usually span many tables. Restoring only projects may break foreign keys or miss related records. A reliable shared-table restore strategy usually needs a controlled restore workflow, not just ad hoc dumps.

Schema-per-tenant makes this more direct:

pg_dump \

--format=custom \

--schema=tenant_123 \

--file=tenant_123.dump \

app_db

pg_restore \

--dbname=app_db_restore \

--schema=tenant_123 \

tenant_123.dumpDatabase-per-tenant is the simplest model for customer-level restore because the database boundary already matches the customer boundary. That does not remove the need for testing. It only makes the restore unit clearer.

A practical restore strategy should answer these questions before an incident:

Can we restore one tenant without affecting others?

Can we restore into a temporary environment first?

How do we validate restored data before switching traffic?

What happens to writes made after the backup point?

Are background jobs, webhooks, and integrations paused during restore?

Is the restore process tested, or only documented?

Backups are not complete until restore has been rehearsed.

Performance: Tenant Shape Matters More Than the Model Name

Multi-tenant performance problems usually come from tenant shape: row distribution, query patterns, index design, lock contention, and background jobs. The architecture model influences these problems, but it does not remove them.

In shared tables, large tenants can dominate indexes and cache behavior. A query that is fine for a tenant with 10,000 rows may be slow for a tenant with 50 million rows. Composite indexes, partial indexes for specific high-volume states, and careful pagination become important.

In schema-per-tenant, each tenant has smaller tables, but there are more objects. Planning, migrations, statistics, and maintenance can become more complex as schema count grows.

In database-per-tenant, resource isolation improves, but connection counts, monitoring overhead, and fleet-wide maintenance become the bottleneck. Performance becomes less about SQL alone and more about orchestration.

The safest approach is to monitor by tenant, not only by endpoint or query fingerprint. A SaaS team should be able to identify:

top tenants by storage

top tenants by query time

top tenants by write volume

slow queries grouped by tenant

background jobs grouped by tenant

restore and migration status per tenant

Without tenant-aware observability, the team will only see aggregate symptoms.

A Practical Adoption Path

For many SaaS products, the most realistic path is not to choose the most isolated model on day one. It is to design so that isolation can increase when needed.

A sensible starting point often looks like this:

Use shared tables with mandatory

tenant_idPut

tenant_idinto primary keys, foreign keys, and high-traffic indexes where appropriateAdd RLS for critical tenant-owned tables

Build tenant-aware logging, metrics, and audit trails

Define a tested tenant export and restore process early

Move selected enterprise tenants to schema-per-tenant or database-per-tenant only when business and operational needs justify it

This avoids premature infrastructure complexity while keeping the data model honest.

For engineers who work deeply with PostgreSQL architecture, tenant isolation, query design, and production data operations, the most relevant certification to review is PostgreSQL Architect.

Conclusion

PostgreSQL multi-tenancy is not a single pattern. It is a set of trade-offs between isolation, cost, performance predictability, and operational recovery.

Use shared tables when you need low operational overhead and can enforce tenant boundaries consistently. Use schema-per-tenant when customer-level operations and logical separation matter more. Use database-per-tenant when isolation is part of the product contract. Add RLS when you need database-enforced protection, but do not use it as an excuse for careless query design.

The most expensive mistake is not choosing the “wrong” model. It is choosing a model without a restore strategy, without tenant-aware observability, and without a clear path for the customers who will outgrow the default.