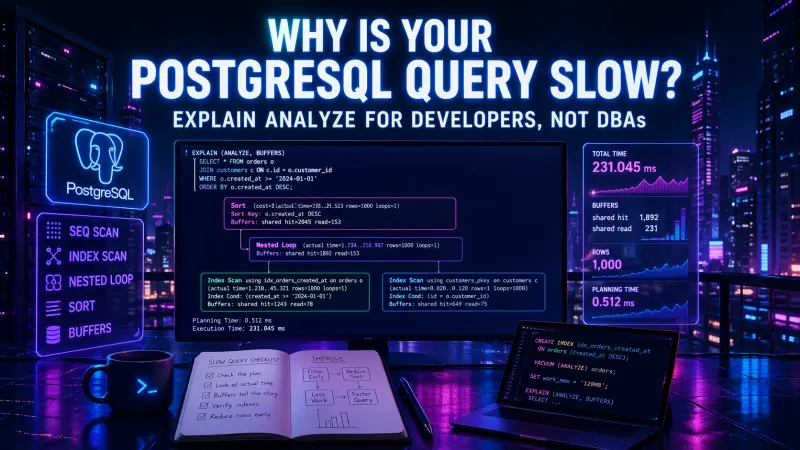

A slow PostgreSQL query usually looks like an application problem first: an endpoint crosses its timeout, a page renders late, a queue worker falls behind, or a report blocks other work. The mistake is to treat EXPLAIN ANALYZE as a specialist-only DBA tool. Developers do not need to tune the whole database to use it well. They need to read enough of the plan to understand where time, I/O, and row growth are coming from.

The useful mindset is simple: PostgreSQL is not “choosing a bad plan” in isolation. It is making a cost-based decision from available indexes, table statistics, predicates, joins, sort requirements, and expected row counts. EXPLAIN ANALYZE lets you compare that expectation with what actually happened.

Start with the query shape, not the database

Consider a common product listing query:

SELECT p.id, p.name, p.price, p.created_at

FROM products p

WHERE p.status = 'active'

AND p.category_id = 42

ORDER BY p.created_at DESC

LIMIT 50;A developer might first ask, “Do we have an index?” That is the right direction, but too broad. PostgreSQL needs an access path that matches the filter and the ordering. An index on category_id alone may reduce some work, but it may still force a sort. An index on created_at alone may help ordering, but it may scan many unrelated rows.

A more useful first check is:

EXPLAIN (ANALYZE, BUFFERS)

SELECT p.id, p.name, p.price, p.created_at

FROM products p

WHERE p.status = 'active'

AND p.category_id = 42

ORDER BY p.created_at DESC

LIMIT 50;ANALYZE runs the query and reports actual timing and rows. BUFFERS shows whether the query is mostly working from memory or reading many pages. This is where the plan becomes operational, not theoretical.

A slow query is rarely slow because of one scary node name. It is slow because a plan reads too much, sorts too much, joins too many rows, or repeats work too many times.

The plan nodes developers should recognize

You do not need to memorize every plan node. For application work, a small set explains most production surprises.

Plan node | What it means | Production signal | Common developer action |

|---|---|---|---|

Seq Scan | Reads the table sequentially | High I/O on large tables, acceptable on small tables | Add or adjust index, narrow predicate, avoid low-selectivity filters |

Index Scan | Uses an index, then visits table rows | Good when result set is selective | Check if it still reads many rows or filters after lookup |

Index Only Scan | Uses index without table visits when visibility allows | Lower heap access, often useful for read-heavy paths | Cover selected columns where justified |

Nested Loop | Runs inner lookup for each outer row | Fast for small outer input, costly when repeated many times | Reduce outer rows, add join indexes, change query shape |

Hash Join | Builds hash table for joining | Often good for larger joins | Watch memory and row estimates |

Sort | Sorts rows after retrieval | Can be expensive before LIMIT | Use index matching ORDER BY, reduce rows before sort |

Bitmap Heap Scan | Combines index hits, then visits heap pages | Often used when many rows match | Check recheck/filter cost and buffer reads |

The node name alone does not tell you whether the plan is bad. A Seq Scan over a tiny lookup table is normal. A Nested Loop over 30 rows can be ideal. A sort of 50 rows is irrelevant. What matters is the relationship between actual rows, loops, buffers, and time.

Read estimates against reality

A typical plan fragment might look like this:

Seq Scan on products p

(cost=0.00..18420.00 rows=120 width=40)

(actual time=0.088..342.517 rows=18400 loops=1)

Filter: ((status = 'active') AND (category_id = 42))

Rows Removed by Filter: 912000

Buffers: shared hit=8120 read=6420The important part is not that PostgreSQL used a Seq Scan. The important part is the mismatch and the work:

estimated rows:

120actual rows:

18400rows removed by filter:

912000buffers read from disk:

6420

This tells you the planner expected a selective predicate but found many more rows. It also tells you the query is scanning a lot of data to return the result. Possible causes include missing indexes, outdated statistics, correlated columns, or a predicate that is not selective enough.

A practical improvement might be:

CREATE INDEX CONCURRENTLY idx_products_category_status_created

ON products (category_id, status, created_at DESC);This index supports the equality filters first, then the requested order. It may let PostgreSQL find the first matching rows in the right order without sorting a large intermediate result.

The exact column order should match your workload. If most queries filter by status alone and category_id is optional, the best index may differ. Indexes speed reads by adding write cost, storage use, and maintenance overhead, so the goal is not “index everything.” The goal is to index the access paths your application actually depends on.

Sequential scan is not always wrong

Developers often see Seq Scan and assume the fix is always an index. That is not true.

PostgreSQL may choose a sequential scan when:

the table is small enough that scanning is cheaper than index navigation

the predicate matches a large portion of the table

the index does not match the filter well

the query must read many columns from many rows anyway

statistics suggest that an index will not reduce work

A common bad pattern is filtering through an expression that prevents a normal index from being useful:

SELECT id, email

FROM users

WHERE lower(email) = lower('alex@example.com');A regular index on email may not help this predicate as written. Better options include normalizing email storage at write time, using a case-insensitive data strategy, or creating an expression index when that query shape is intentional:

CREATE INDEX CONCURRENTLY idx_users_lower_email

ON users (lower(email));The right fix depends on whether this is a core lookup path or an occasional admin search. For a login or account lookup, this query deserves a stable indexed path. For a rare back-office filter, the operational cost of another index may not be justified.

Nested loops are about repetition

A Nested Loop is not automatically bad. It is the natural shape for “for each row from A, find matching rows in B.” With a small outer input and an index on the inner side, it can be very efficient.

It becomes a problem when the outer side is much larger than expected:

Nested Loop

(actual time=1.210..1280.442 rows=25000 loops=1)

-> Index Scan using idx_orders_status on orders o

(actual rows=50000 loops=1)

-> Index Scan using idx_order_items_order_id on order_items i

(actual rows=3 loops=50000)The inner scan is not slow once. It is repeated 50,000 times. Even a cheap lookup becomes expensive when multiplied.

A developer-level fix is usually to reduce the outer rows earlier or change the query so PostgreSQL can aggregate or join more efficiently. For example, avoid pulling a broad order set and joining everything when the endpoint only needs recent orders:

SELECT o.id, o.created_at, count(i.id) AS item_count

FROM orders o

JOIN order_items i ON i.order_id = o.id

WHERE o.customer_id = $1

AND o.created_at >= now() - interval '90 days'

GROUP BY o.id, o.created_at

ORDER BY o.created_at DESC

LIMIT 50;Then support the access pattern:

CREATE INDEX CONCURRENTLY idx_orders_customer_created

ON orders (customer_id, created_at DESC);

CREATE INDEX CONCURRENTLY idx_order_items_order_id

ON order_items (order_id);The important change is not just “add indexes.” It is making the query start from a selective customer and time range before joining item rows.

Sort cost often hides behind LIMIT

LIMIT 50 does not guarantee that PostgreSQL only processes 50 rows. If the database must find all matching rows, sort them, and then take the first 50, the expensive part happens before the limit.

This query can be deceptively costly:

SELECT id, title, published_at

FROM articles

WHERE status = 'published'

ORDER BY published_at DESC

LIMIT 20;Without a useful index, the plan may scan many published rows, sort them, and return 20. A better index can let the database walk rows in the required order:

CREATE INDEX CONCURRENTLY idx_articles_status_published_at

ON articles (status, published_at DESC);For feeds, dashboards, timelines, and admin lists, this is one of the highest-value optimizations: match filtering and ordering in the same index where the workload is frequent enough to justify it.

Buffers tell you whether the query is I/O-heavy

Timing alone can mislead you. A query may be fast in development because everything is cached, then slow in production under memory pressure. BUFFERS adds context:

Buffers: shared hit=204 read=18950 dirtied=0A high read count means PostgreSQL had to read pages from storage. A high hit count means pages were found in shared buffers. Neither number is “bad” by itself, but the ratio helps you understand whether the query is CPU-heavy, memory-resident, or I/O-heavy.

For developers, buffer output is useful because it changes the conversation. Instead of saying “the database is slow,” you can say:

this query reads too many table pages

this index still causes many heap visits

this sort happens after a large scan

this endpoint depends on cached data to stay fast

this report should run asynchronously or against a read replica

That is a better engineering discussion than arguing over milliseconds from a single run.

Practical workflow for fixing a slow query

Use a repeatable process rather than guessing.

Capture the exact SQL generated by the application, including bound values.

Run

EXPLAIN (ANALYZE, BUFFERS)in an environment with realistic data.Find the node with the largest actual time, row count expansion, repeated loops, or buffer reads.

Compare estimated rows with actual rows.

Decide whether the problem is access, filtering, joining, sorting, or returning too much data.

Change one thing: query shape, index, predicate, selected columns, pagination model, or aggregation.

Re-run the plan and compare the operational signals, not only the total runtime.

For ORM-heavy applications, also inspect whether the slow SQL is actually one query or an N+1 pattern. EXPLAIN ANALYZE helps with one SQL statement, but it will not tell you that your endpoint executed 300 similar queries unless you inspect application-level query logs.

Fix the query before tuning the server

Server-level settings matter, but they are rarely the first fix for an application query that scans too much or joins poorly. Increasing memory for sorts will not help if the query should not be sorting 500,000 rows in the first place. Faster disks will not solve a predicate that forces full-table reads on every request. More CPU will not remove an accidental N+1 query pattern.

Good PostgreSQL performance work starts with the contract between application code and data model:

Does the query express the smallest useful result?

Are filters selective enough?

Does the index match the predicate and ordering?

Are joins starting from the right side?

Is pagination avoiding large offsets on hot paths?

Is the endpoint asking for columns it does not need?

If PostgreSQL query analysis is part of your day-to-day backend or architecture work, the most relevant certification to review is PostgreSQL Architect, especially if you are expected to reason about query plans, schema design, and production data behavior.

Conclusion

EXPLAIN ANALYZE is not a magic performance command, and it is not only for DBAs. It is a way for developers to connect application behavior with database execution. The practical skill is knowing which parts of the plan matter: actual rows versus estimates, loops, sort placement, access method, and buffers.

When a PostgreSQL query is slow, do not start by blaming the planner or adding random indexes. Start by reading what the database actually did. Then make the smallest change that reduces work: fewer rows scanned, fewer loops repeated, fewer pages read, fewer rows sorted, or a better index for the query shape. That is how query optimization becomes an engineering habit rather than a production emergency.