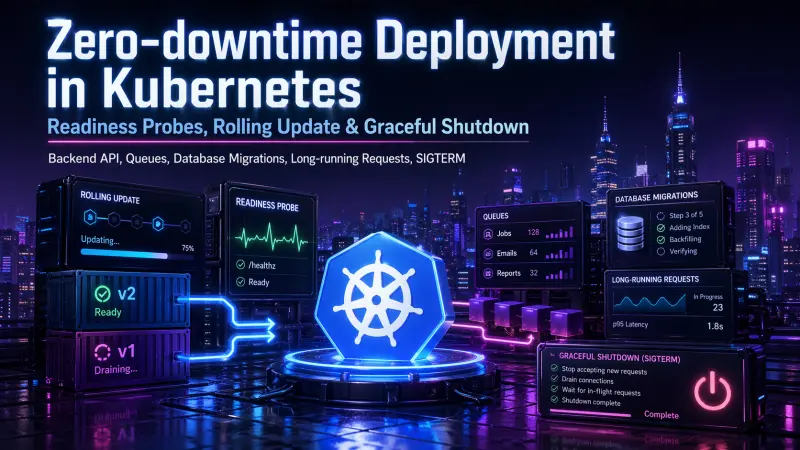

Zero-downtime deployment in Kubernetes is often treated as a deployment strategy problem: set strategy: RollingUpdate, add a readiness probe, and the platform will handle the rest. In real backend systems, that is not enough. The difficult part is not starting a new pod. The difficult part is stopping the old one without dropping requests, duplicating jobs, blocking database writes, or routing traffic to an application that is technically running but not ready.

For a production backend API, deployment safety depends on several moving parts: readiness probes, rolling update limits, graceful shutdown, queue worker behavior, database migration strategy, and how the application reacts to SIGTERM. Kubernetes can coordinate traffic and pod lifecycle, but it cannot infer your application’s business rules. Your service must participate.

What “zero downtime” actually means

Zero downtime does not mean no pod ever exits. It means users and dependent systems do not observe an outage during deployment. For an API, that usually means:

Existing requests are allowed to complete where practical.

New traffic stops reaching pods that are shutting down.

New pods receive traffic only after the application is ready.

Queue jobs are not interrupted in unsafe ways.

Database changes remain compatible with both old and new code.

Load balancers, ingress controllers, and service endpoints converge without a visible gap.

This is why “zero downtime” is less about one Kubernetes feature and more about lifecycle alignment.

Kubernetes can remove a pod from service endpoints, but only the application can decide when it is safe to stop accepting work.

The common failure mode: alive is not ready

A frequent mistake is using a simple health endpoint for everything. The app returns 200 OK, so the pod is considered ready. But a backend service can be alive while still being unable to serve production traffic.

For example, the process may be running while:

The database connection pool is not initialized.

Required configuration is missing.

A cache warm-up is still in progress.

Background consumers have not started correctly.

The service is draining and should not receive new requests.

Kubernetes separates this into different concerns:

| Probe | What it should answer | Traffic impact | Typical failure consequence |
|---|---|---|---|
| startupProbe | Has the application finished booting? | Delays liveness and readiness checks | Prevents slow-starting apps from being killed too early |
| readinessProbe | Can this pod receive traffic now? | Removes pod from service endpoints when failing | Stops new requests from reaching unavailable or draining pods |
| livenessProbe | Is the process stuck beyond recovery? | Restarts the container when failing | Recovers from deadlocks or broken process state |

A production API should not rely on livenessProbe to control traffic. Use readinessProbe for traffic eligibility and reserve livenessProbe for cases where restart is the correct recovery action.
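
As a rough illustration of that split, readiness can be derived from named dependency checks plus a draining flag, while liveness stays trivial. The function names and check names below (`evaluateReadiness`, `dbPool`, `config`, `cacheWarm`) are hypothetical and not part of any framework.

```javascript
// Sketch: derive the readiness response from individual checks.
// All names here are illustrative assumptions.
function evaluateReadiness(checks, draining) {
  // Collect the names of checks that are not passing yet.
  const failing = Object.entries(checks)
    .filter(([, ok]) => !ok)
    .map(([name]) => name);
  if (draining) failing.push("draining");
  return { status: failing.length === 0 ? 200 : 503, failing };
}

// Liveness only reports that the process can still respond.
function evaluateLiveness() {
  return { status: 200 };
}

const booting = evaluateReadiness(
  { dbPool: true, config: true, cacheWarm: false },
  false
);
console.log(booting.status, booting.failing.join(",")); // 503 cacheWarm
```

Returning the failing check names makes a 503 easier to diagnose from logs or metrics, which helps when a rollout stalls on readiness.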

A deployment spec that supports draining

A practical Kubernetes deployment starts by making rollout behavior explicit. The goal is to avoid taking down too many old pods before new pods are actually ready.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: backend-api
spec:
  replicas: 6
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0
      maxSurge: 1
  minReadySeconds: 10
  selector:
    matchLabels:
      app: backend-api
  template:
    metadata:
      labels:
        app: backend-api
    spec:
      terminationGracePeriodSeconds: 60
      containers:
        - name: api
          image: registry.example/backend-api:2026-04-22
          ports:
            - containerPort: 8080
          readinessProbe:
            httpGet:
              path: /health/ready
              port: 8080
            periodSeconds: 5
            failureThreshold: 1
          livenessProbe:
            httpGet:
              path: /health/live
              port: 8080
            periodSeconds: 10
            failureThreshold: 3
          lifecycle:
            preStop:
              exec:
                command: ["/bin/sh", "-c", "sleep 10"]
```

Several details matter here.

maxUnavailable: 0 tells Kubernetes not to reduce available capacity during rollout. maxSurge: 1 allows one extra pod above the desired replica count, so a new pod can become ready before an old pod is removed. This is usually safer for APIs than replacing pods one-for-one under load.
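
The capacity bounds implied by these two settings are simple arithmetic. The sketch below computes them for absolute values or percentages; Kubernetes rounds maxSurge percentages up and maxUnavailable percentages down. The helper names `resolveBound` and `rolloutBounds` are made up for this example.

```javascript
// Resolve an absolute count or a percentage string against the replica count.
// maxSurge percentages round up, maxUnavailable percentages round down.
function resolveBound(value, replicas, roundUp) {
  if (typeof value === "number") return value;
  // Multiply before dividing to avoid floating-point drift on whole results.
  const scaled = (parseFloat(value) * replicas) / 100;
  return roundUp ? Math.ceil(scaled) : Math.floor(scaled);
}

// Lowest number of available pods and highest total pod count during a rollout.
function rolloutBounds({ replicas, maxSurge, maxUnavailable }) {
  const surge = resolveBound(maxSurge, replicas, true);
  const unavailable = resolveBound(maxUnavailable, replicas, false);
  return {
    minAvailablePods: replicas - unavailable,
    maxTotalPods: replicas + surge,
  };
}

const strict = rolloutBounds({ replicas: 6, maxSurge: 1, maxUnavailable: 0 });
console.log(strict.minAvailablePods, strict.maxTotalPods); // 6 7

const defaults = rolloutBounds({ replicas: 6, maxSurge: "25%", maxUnavailable: "25%" });
console.log(defaults.minAvailablePods, defaults.maxTotalPods); // 5 8
```

With the spec above, capacity never drops below 6 pods and never exceeds 7, whereas the 25% defaults would allow it to dip to 5.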

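terminationGracePeriodSeconds bounds the whole termination sequence: the preStop hook runs first, then the container receives SIGTERM, and whatever remains of the grace period is the time the application has to finish in-flight work before SIGKILL. With a 60-second grace period and a 10-second preStop sleep, roughly 50 seconds remain after SIGTERM. The grace period should be longer than the preStop delay plus the normal upper bound of request duration or job checkpoint interval, but not so high that broken pods remain around indefinitely.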

The preStop sleep is not a replacement for graceful shutdown. It gives service endpoint updates and external load balancers a short buffer before the process exits. The application still needs to stop accepting new work and finish active work properly.
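
Because the preStop delay counts against the grace period, the application's own forced-exit timer has to be derived from both values. A minimal sketch, assuming a hypothetical helper `drainBudgetMs` and a safety margin chosen by the operator:

```javascript
// Time the application may spend draining after SIGTERM, in milliseconds.
// The safety margin is an assumption: it leaves room to exit before SIGKILL.
function drainBudgetMs({ terminationGracePeriodSeconds, preStopSeconds, safetyMarginSeconds }) {
  const budget = terminationGracePeriodSeconds - preStopSeconds - safetyMarginSeconds;
  if (budget <= 0) {
    throw new RangeError("grace period leaves no time to drain after preStop");
  }
  return budget * 1000;
}

console.log(
  drainBudgetMs({ terminationGracePeriodSeconds: 60, preStopSeconds: 10, safetyMarginSeconds: 5 })
); // 45000
```

If the result is shorter than the slowest normal request, either the grace period needs to grow or the request needs to become shorter or asynchronous.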

Readiness must change during shutdown

A pod that receives SIGTERM should usually become not ready before it exits. This prevents new traffic from being routed to a process that is already draining.

A minimal Node.js API pattern looks like this:

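```javascript
import http from "node:http";

// handleRequest, initializeDatabasePool, and warmRequiredState are
// application-specific functions defined elsewhere.

let ready = false;
let shuttingDown = false;
let activeRequests = 0;

const server = http.createServer(async (req, res) => {
  // Liveness: the process is up and able to answer.
  if (req.url === "/health/live") {
    res.writeHead(200);
    return res.end("ok");
  }

  // Readiness: fails while booting and as soon as shutdown starts.
  if (req.url === "/health/ready") {
    const status = ready && !shuttingDown ? 200 : 503;
    res.writeHead(status);
    return res.end(status === 200 ? "ready" : "not ready");
  }

  activeRequests++;
  try {
    await handleRequest(req, res);
  } finally {
    activeRequests--;
  }
});

function shutdown() {
  if (shuttingDown) return; // ignore repeated signals
  shuttingDown = true;
  console.log(`SIGTERM received, draining ${activeRequests} active request(s)`);

  // Stop accepting new connections; exit once in-flight requests finish.
  server.close(() => {
    process.exit(0);
  });

  // Forced exit before SIGKILL. The 60-second grace period also covers the
  // 10-second preStop sleep, so about 50 seconds remain after SIGTERM.
  setTimeout(() => {
    process.exit(1);
  }, 45_000);
}

process.on("SIGTERM", shutdown);

await initializeDatabasePool();
await warmRequiredState();
ready = true;
server.listen(8080);
```

The important behavior is not specific to Node.js. The same principle applies in PHP, Go, Java, Python, or any backend stack: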

Expose readiness based on real traffic eligibility.

Flip readiness to false when shutdown starts.

Stop accepting new connections.

Let active requests complete within a bounded window.

Exit before Kubernetes sends SIGKILL.

If your framework or server already handles parts of this lifecycle, use it. But verify the actual behavior under deployment, not just under local process termination.
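
The steps above can be captured in a small, stack-independent state tracker: it rejects new work once draining starts and signals when the last in-flight unit finishes. `DrainTracker` is a hypothetical helper written for this sketch, not a library class.

```javascript
// Hypothetical helper: tracks in-flight work and reports when draining completes.
class DrainTracker {
  constructor(onDrained) {
    this.active = 0;
    this.draining = false;
    this.notified = false;
    this.onDrained = onDrained;
  }

  // Call before starting a unit of work. Returns false if it must be rejected.
  begin() {
    if (this.draining) return false;
    this.active++;
    return true;
  }

  // Call when a unit of work finishes, whether it succeeded or failed.
  end() {
    this.active--;
    this.maybeNotify();
  }

  // Call when shutdown starts (for example from a SIGTERM handler).
  startDraining() {
    this.draining = true;
    this.maybeNotify();
  }

  // Fire the callback exactly once, when draining and nothing is in flight.
  maybeNotify() {
    if (this.draining && this.active === 0 && !this.notified) {
      this.notified = true;
      this.onDrained();
    }
  }
}
```

In a real service the `onDrained` callback would typically close resources and exit, while a separate timer enforces the upper bound in case work never finishes.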

Long requests need explicit policy

Long-running requests are where zero-downtime assumptions often break. A 30-second report generation endpoint, file upload, export, or payment callback may outlive the default expectations of a rolling update.

There are only a few defensible options:

| Request type | Preferred deployment behavior | Operational requirement |
|---|---|---|
| Short API call | Finish during grace period | Request timeout below termination grace period |
| Long read operation | Allow completion or move to async job | Clear timeout and cancellation policy |
| File upload | Avoid mid-stream termination | Ingress, app, and pod timeouts aligned |
| Payment or webhook callback | Make handler idempotent | Safe retry handling and durable state |
| Export or report generation | Move work to queue | Client polls or receives completion notification |

Trying to make every request survive indefinitely is usually the wrong target. A better design is to keep synchronous HTTP work bounded and move long work to queues with explicit retry and idempotency.
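
One way to keep synchronous work bounded is the accept-then-poll shape: the API records the job and returns immediately, and the client checks back later. The sketch below uses an in-memory `Map` purely as a stand-in; a real system would need a durable queue and job table so that state survives pod replacement. The function names are hypothetical.

```javascript
// In-memory stand-in for a durable queue and job table (assumption for the sketch).
const exportJobs = new Map();
let nextExportId = 1;

// API handler side: accept the request and return without doing the work.
function submitExport(params) {
  const jobId = String(nextExportId++);
  exportJobs.set(jobId, { status: "queued", params, result: null });
  return { httpStatus: 202, jobId };
}

// Worker side: record the outcome once the export has been produced.
function completeExport(jobId, result) {
  const job = exportJobs.get(jobId);
  if (!job) return false;
  job.status = "done";
  job.result = result;
  return true;
}

// API handler side: report the current state to a polling client.
function pollExport(jobId) {
  const job = exportJobs.get(jobId);
  if (!job) return { httpStatus: 404 };
  return { httpStatus: 200, status: job.status, result: job.result };
}
```

With this shape, the HTTP request that a rollout might interrupt lasts milliseconds, and the long-running part is governed by worker shutdown rules instead.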

Queue workers are not API pods

Backend systems often deploy API servers and queue workers from the same image. That is fine. Treating them as the same lifecycle is not.

An API pod must stop accepting new HTTP traffic. A worker must stop fetching new jobs, finish or safely release the current job, then exit. If a worker dies mid-job, the queue system must either retry safely or preserve enough state to resume.

A worker shutdown loop can be simple:

```bash
#!/usr/bin/env bash
set -euo pipefail

term_received=false

# Bash defers a trap until the foreground command finishes,
# so the current job completes before the flag is set.
trap 'term_received=true' TERM INT

while true; do
  if [ "$term_received" = true ]; then
    echo "Shutdown requested, not fetching new jobs"
    exit 0
  fi

  php artisan queue:work \
    --once \
    --timeout=45 \
    --tries=3

  sleep 1
done
```

This pattern is intentionally conservative. The worker processes one job at a time, checks whether shutdown was requested, and avoids fetching another job after SIGTERM. In real systems, the exact command differs, but the lifecycle requirement is the same: do not start new work when the pod is already terminating.
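
Stopping cleanly covers the normal case; retries after a crash are only safe if the job handler is idempotent. A minimal sketch of that idea, assuming each job carries a unique `id` and using an in-memory `Set` where a real system would use durable storage such as a unique database key:

```javascript
// Processed job IDs. In-memory only for the sketch; this must be durable in practice.
const processedJobIds = new Set();

// Runs the handler at most once per job ID across repeated deliveries.
function processOnce(job, handler) {
  if (processedJobIds.has(job.id)) return "skipped";
  handler(job);
  // Mark only after the handler succeeds, so a failed job is retried.
  processedJobIds.add(job.id);
  return "processed";
}
```

Ideally the marker is written in the same transaction as the job's side effects, so that a crash between the two cannot cause a duplicate.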

For queue-heavy services, API and worker deployments should often be separated:

| Deployment | Scaling signal | Shutdown concern | Rollout risk |
|---|---|---|---|
| API pods | Request rate, latency, CPU | In-flight HTTP requests | Dropped traffic or failed requests |
| Worker pods | Queue depth, job duration, CPU | Active job completion | Duplicate jobs or lost progress |
| Scheduler pods | Time-based triggers | Avoid duplicate scheduling | Multiple pods triggering same task |
| Migration job | Schema version | Compatibility with live code | Breaking old or new release |

Database migrations are the hidden downtime source

Many Kubernetes rollouts fail because the application deployment is safe, but the database migration is not. Rolling updates mean old and new application versions may run at the same time. Your schema must support that overlap.

The safe pattern is expand, deploy, contract:

Expand the schema with backward-compatible changes.

Deploy application code that can work with both old and new schema state.

Backfill data if needed.

Switch reads or writes to the new path.

Remove old columns, indexes, or code only after all old versions are gone.

For example, adding a nullable column is usually easier to roll out than renaming a column used by live code. A safer migration might look like this:

```sql
-- PostgreSQL syntax. CREATE INDEX CONCURRENTLY cannot run inside a
-- transaction block, so it must be executed outside the migration transaction.
ALTER TABLE orders
  ADD COLUMN external_reference text;

CREATE INDEX CONCURRENTLY idx_orders_external_reference
  ON orders (external_reference);
```

The exact SQL depends on the database, but the principle is stable: avoid schema changes that instantly break the currently running version. Destructive migrations should be delayed until the system no longer depends on the old structure.

This is especially important with maxSurge, because during a rollout your old and new pods intentionally coexist. That coexistence is what preserves availability. The database must be compatible with it.
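
On the application side, the overlap means code has to tolerate rows from either schema state. The sketch below shows a tolerant read and a dual write for the `external_reference` column; `legacy_reference` is a hypothetical old field invented for this example, as are the function names.

```javascript
// Reads an order row that may come from the old or the expanded schema.
// `legacy_reference` is a hypothetical pre-migration field.
function orderReference(row) {
  if (row.external_reference != null) return row.external_reference;
  return row.legacy_reference ?? null;
}

// During the transition, new code writes both fields so old pods keep working.
function buildOrderWrite(reference) {
  return { legacy_reference: reference, external_reference: reference };
}

console.log(orderReference({ legacy_reference: "old-1" })); // old-1
```

Once every pod runs the new version and the backfill is complete, the fallback and the dual write can be removed in a later release, followed by the old column.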

Rolling update is not enough without capacity

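maxUnavailable: 0 helps only if the cluster can schedule surge pods. If the cluster is already at its CPU, memory, or pod limit, the surge pod stays Pending and the rollout stalls instead of progressing; with a nonzero maxUnavailable, old pods can be removed before their replacements are running. Exhausted connection limits on the database or other dependencies have a similar effect, because new pods may start but never become ready.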

Before assuming a rollout is safe, check the operational constraints:

Is there enough node capacity for maxSurge?

Are pod disruption budgets aligned with deployment strategy?

Can the database handle temporary extra connections?

Are connection pools sized per pod, not just globally?

Does autoscaling react fast enough for rollout traffic?

Do ingress and load balancer timeouts exceed normal request duration?

Are readiness failures visible in metrics and alerts?

A rollout can be correct in YAML and still fail because the surrounding system has no spare capacity.
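
The database connection question in particular is easy to estimate ahead of time. A rough sketch, assuming every pod opens a fixed-size pool and using illustrative numbers rather than measured ones:

```javascript
// Peak connections during a rollout: desired pods plus surge pods, each with a full pool.
function peakDbConnections({ replicas, maxSurge, poolSizePerPod }) {
  return (replicas + maxSurge) * poolSizePerPod;
}

// Checks the peak against the database limit, minus connections reserved
// for other clients such as workers, migrations, or admin tools.
function fitsConnectionLimit(rollout, dbMaxConnections, reservedConnections = 0) {
  return peakDbConnections(rollout) <= dbMaxConnections - reservedConnections;
}

const rollout = { replicas: 6, maxSurge: 1, poolSizePerPod: 20 };
console.log(peakDbConnections(rollout)); // 140
console.log(fitsConnectionLimit(rollout, 150, 20)); // false
```

In this example the steady state of 120 connections fits, but the surge pod pushes the total past what is left for the API, which is the kind of gap that only shows up during a deployment.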

What to adopt first

A practical adoption sequence is better than trying to fix every lifecycle edge case at once.

Start with the API lifecycle:

Add real readinessProbe and livenessProbe endpoints.

Make readiness fail during shutdown.

Handle SIGTERM in the application.

Set terminationGracePeriodSeconds based on observed request duration.

Use maxUnavailable: 0 for critical API deployments.

Test rollout under traffic, not just in an idle environment.

Then separate worker behavior:

Run workers in their own deployment.

Stop fetching jobs after shutdown starts.

Make job handlers idempotent.

Align job timeout, queue retry or visibility timeout, and termination grace period.

Avoid running migrations inside every application pod startup.
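
The timeout alignment item in the worker list can be checked mechanically. The sketch below assumes a queue that redelivers a job after a visibility (or retry-after) timeout; the parameter names and the `checkWorkerTimeouts` helper are hypothetical.

```javascript
// Returns a list of misalignments between worker-related timeouts.
function checkWorkerTimeouts({
  jobTimeoutSeconds,
  visibilityTimeoutSeconds,
  terminationGracePeriodSeconds,
}) {
  const problems = [];
  // A job that can outlive the grace period may be killed mid-run on every deploy.
  if (jobTimeoutSeconds >= terminationGracePeriodSeconds) {
    problems.push("job timeout must be shorter than the termination grace period");
  }
  // If the queue redelivers before the job times out, two workers can run the same job.
  if (visibilityTimeoutSeconds <= jobTimeoutSeconds) {
    problems.push("visibility timeout must exceed the job timeout");
  }
  return problems;
}

console.log(
  checkWorkerTimeouts({
    jobTimeoutSeconds: 45,
    visibilityTimeoutSeconds: 90,
    terminationGracePeriodSeconds: 60,
  }).length
); // 0
```

A check like this can run in CI against the deployment manifest and queue configuration, so drift between the three values is caught before a rollout.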

Finally, clean up database delivery:

Use backward-compatible migrations.

Avoid destructive schema changes in the same release as dependent code changes.

Treat long backfills as operational jobs, not as blocking deploy steps.

Keep old and new application versions compatible during rollout overlap.

Test the lifecycle, not only the code

Unit tests will not prove zero-downtime deployment. You need deployment tests that exercise the lifecycle under realistic conditions.

A useful test plan includes:

Send steady traffic while running kubectl rollout restart deployment/backend-api.

Include slow requests that approach normal timeout limits.

Verify no new traffic reaches pods after readiness fails.

Confirm SIGTERM is logged and handled.

Check that queue workers do not start new jobs after termination begins.

Run migrations in the same order used by production delivery.

Watch p95 and p99 latency, error rate, queue retries, and database connection count during rollout.

The goal is not to prove that failure is impossible. The goal is to make shutdown behavior predictable enough that deployment is a routine operation rather than a production gamble.
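
To make that judgment concrete, the results of a traffic run during a rollout can be reduced to a few numbers. The sketch below assumes the load generator produces samples of the form `{ status, latencyMs }`, with `status: 0` used as a made-up convention for connection failures; it uses a nearest-rank percentile.

```javascript
// Nearest-rank percentile over a list of numbers; returns null for an empty list.
function percentile(values, p) {
  if (values.length === 0) return null;
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[Math.max(rank, 1) - 1];
}

// Summarizes samples collected while a rollout was in progress.
function summarizeRollout(samples) {
  // Count server errors and connection failures (status 0 by convention here).
  const errors = samples.filter((s) => s.status >= 500 || s.status === 0).length;
  const latencies = samples.map((s) => s.latencyMs);
  return {
    total: samples.length,
    errorRate: samples.length === 0 ? 0 : errors / samples.length,
    p95: percentile(latencies, 95),
    p99: percentile(latencies, 99),
  };
}
```

Comparing this summary against the same run without a rollout shows whether the deployment itself adds errors or tail latency.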

Conclusion

Zero-downtime deployment in Kubernetes is not achieved by enabling rolling updates alone. Rolling updates create the opportunity for safe replacement. Readiness probes decide when traffic should flow. Graceful shutdown decides what happens to work already in progress. Database migrations decide whether old and new versions can coexist.

For backend APIs, queues, migrations, long requests, and SIGTERM, the reliable approach is explicit lifecycle design. Make readiness truthful, make shutdown bounded, make jobs idempotent, make migrations backward-compatible, and test deployment under real traffic. That is what turns Kubernetes from a restart mechanism into a dependable delivery platform.